This week, I implemented a character-level recurrent neural network (or char-rnn for short) in PyTorch, and used it to generate fake book titles. The code, training data, and pre-trained models can be found on my GitHub repo.

Me the Bean

Be the Life

Yours

Model Overview

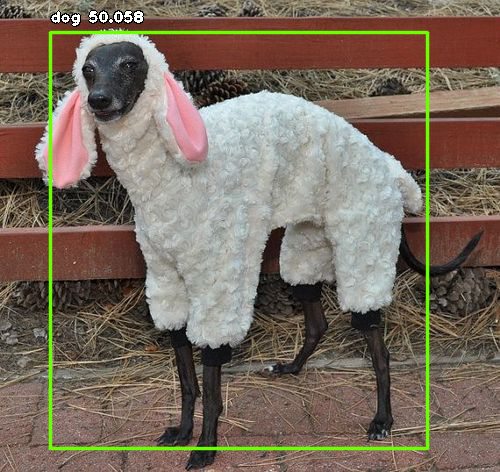

The char-rnn language model is a recurrent neural network that makes predictions on the character level. In contrast, many language models operate on the word level.
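To make the distinction concrete, here is a minimal sketch of how the same title breaks into tokens at each level, and how next-character training pairs can be formed. The `EOS` marker and variable names are illustrative assumptions, not taken from the repo.

```python
title = "King of the Dark"

# Word level: each token is a whole word drawn from a large vocabulary.
word_tokens = title.split()

# Character level: each token is one character, so the vocabulary is tiny
# but the sequence is much longer.
char_tokens = list(title)

# Next-character prediction: the target for each character is the character
# that follows it, with a hypothetical end-of-sequence marker at the end.
EOS = "<EOS>"
pairs = list(zip(char_tokens, char_tokens[1:] + [EOS]))

print(word_tokens)       # ['King', 'of', 'the', 'Dark']
print(len(char_tokens))  # 16
print(pairs[:3])         # [('K', 'i'), ('i', 'n'), ('n', 'g')]
```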

Character-level predictions can be a bit more chaotic, but they may be better suited to making up fake words (e.g. Harry Potter spells, band names, fake slang, fake cities, or fantasy terms). Word-level language models may have an advantage when generating longer pieces of text, like summaries or fiction, since they don’t have to learn how to spell.

Hybrid approaches between the character and word levels also exist. For example, the GPT-2 model uses byte pair encoding, an approach that interpolates between the word level for common sequences and the character level for rare sequences.
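As a rough illustration of the byte pair encoding idea, the sketch below learns merges on a toy corpus by repeatedly fusing the most frequent adjacent pair of symbols. It is a simplified character-based version with hypothetical function names, not GPT-2's actual tokenizer (which works on bytes and has additional rules).

```python
from collections import Counter

def most_frequent_pair(words):
    """Return the most common adjacent symbol pair, weighted by word count."""
    pairs = Counter()
    for symbols, freq in words.items():
        for a, b in zip(symbols, symbols[1:]):
            pairs[(a, b)] += freq
    return max(pairs, key=pairs.get) if pairs else None

def merge_pair(words, pair):
    """Replace every occurrence of `pair` with a single fused symbol."""
    merged = {}
    for symbols, freq in words.items():
        out, i = [], 0
        while i < len(symbols):
            if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
                out.append(symbols[i] + symbols[i + 1])
                i += 2
            else:
                out.append(symbols[i])
                i += 1
        key = tuple(out)
        merged[key] = merged.get(key, 0) + freq
    return merged

def learn_bpe(corpus, num_merges):
    """Start from single characters and apply `num_merges` greedy merges."""
    words = dict(Counter(tuple(word) for word in corpus.split()))
    merges = []
    for _ in range(num_merges):
        pair = most_frequent_pair(words)
        if pair is None:
            break
        merges.append(pair)
        words = merge_pair(words, pair)
    return merges, words

merges, words = learn_bpe("the dark the story the bean voringe", num_merges=2)
print(merges)  # [('t', 'h'), ('th', 'e')]
```

After two merges the common word "the" has become a single symbol, while the rare word "voringe" is still spelled out character by character.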

This particular char-rnn implementation is set up to handle multiple categories of text. Here, it makes predictions for different book genres, such as Romance, Fantasy, and Young Adult.
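One common way to condition a char-rnn on a category is to concatenate a one-hot category vector with the one-hot character vector at every time step. The sketch below shows that input encoding in plain Python. The genre subset, the character set, and the function names are assumptions for illustration, and the repo may encode its inputs differently.

```python
import string

GENRES = ["Romance", "Fantasy", "Young Adult"]  # illustrative subset only
ALPHABET = string.ascii_letters + " .,;'-"      # assumed character set
NUM_CHARS = len(ALPHABET) + 1                   # one extra slot for end-of-sequence

def one_hot(index, size):
    """Return a list of zeros with a single 1.0 at `index`."""
    vec = [0.0] * size
    vec[index] = 1.0
    return vec

def encode_step(genre, char):
    """Build the input for one time step: genre one-hot followed by char one-hot."""
    genre_vec = one_hot(GENRES.index(genre), len(GENRES))
    char_vec = one_hot(ALPHABET.index(char), NUM_CHARS)
    return genre_vec + char_vec

def encode_title(genre, title):
    """Encode a whole title as a sequence of per-step input vectors."""
    return [encode_step(genre, char) for char in title]

steps = encode_title("Fantasy", "Red Sande")
print(len(steps), len(steps[0]))  # 9 62
```

Because the genre vector is repeated at every step, the network sees the category throughout the sequence rather than only at the start.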

Training Data

The training data used for this model is a modified version of a Goodreads data scrape of 20K book titles. I transformed the CSV file into separate text files for the top 30 genres. The resulting split dataset can be found in my GitHub repo.
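A split like this can be sketched with the standard library alone. The column names `title` and `genre`, the function names, and the sample rows are assumptions made for this example. The actual scrape may use different headers or list several genres per book.

```python
import csv
import io
from collections import defaultdict
from pathlib import Path

def group_titles_by_genre(csv_file, top_n=30, title_col="title", genre_col="genre"):
    """Group titles by genre and keep only the `top_n` most frequent genres."""
    titles = defaultdict(list)
    for row in csv.DictReader(csv_file):
        title, genre = row[title_col].strip(), row[genre_col].strip()
        if title and genre:
            titles[genre].append(title)
    top = sorted(titles, key=lambda g: len(titles[g]), reverse=True)[:top_n]
    return {genre: titles[genre] for genre in top}

def write_genre_files(grouped, out_dir):
    """Write one text file per genre, with one title per line."""
    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    for genre, genre_titles in grouped.items():
        path = out / f"{genre}.txt"
        path.write_text("\n".join(genre_titles) + "\n", encoding="utf-8")

# Tiny in-memory stand-in for the real CSV file (made-up rows for the demo).
sample = io.StringIO(
    "title,genre\n"
    "Dune,Science Fiction\n"
    "Emma,Romance\n"
    "Persuasion,Romance\n"
    "Beowulf,Poetry\n"
)
grouped = group_titles_by_genre(sample, top_n=2)
print(grouped)
```

Calling `write_genre_files(grouped, "data")` would then produce files such as `data/Romance.txt`. Genre names containing path separators would need sanitizing first.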

Training this model took about 20 minutes on an NVIDIA GeForce GTX 1080 Ti GPU. Generating samples takes only a few seconds.

Results

The following results are a hand-picked selection of outputs. Note that I’m mainly including examples that consist of real words, with a few exceptions.

Romance

Years of the Dark

You the Book

The Stove to the Story

Fantasy

Book of the Dark

Red Sande

Fiction

Jen the Bead

King the Bean

Historical

Other and Story

Science Fiction

Voringe

In the Beantire

Mystery

Kiss of the Dark

Red Story

Classics

Gorden the Story of Merica

Childrens

Late

Story of the Bean

Paranormal

Red Store

Stariss and Storiss

Wind Store

New Adult

Growing Me

In the Bean

Me the Bean

Poetry

Me

Erotica

King of the Dark

Dork of the Dark

Work of the Dark

Bed Storys of the Dark

Your Mind

Biography

On Anger and Of Mand Anger

Comically, many of the book titles revolve around beans, beads, stores, and darkness. While I did notice some subtle differences between genres, they don’t appear to be particularly drastic overall.